
6/13/2023 Dragonframe

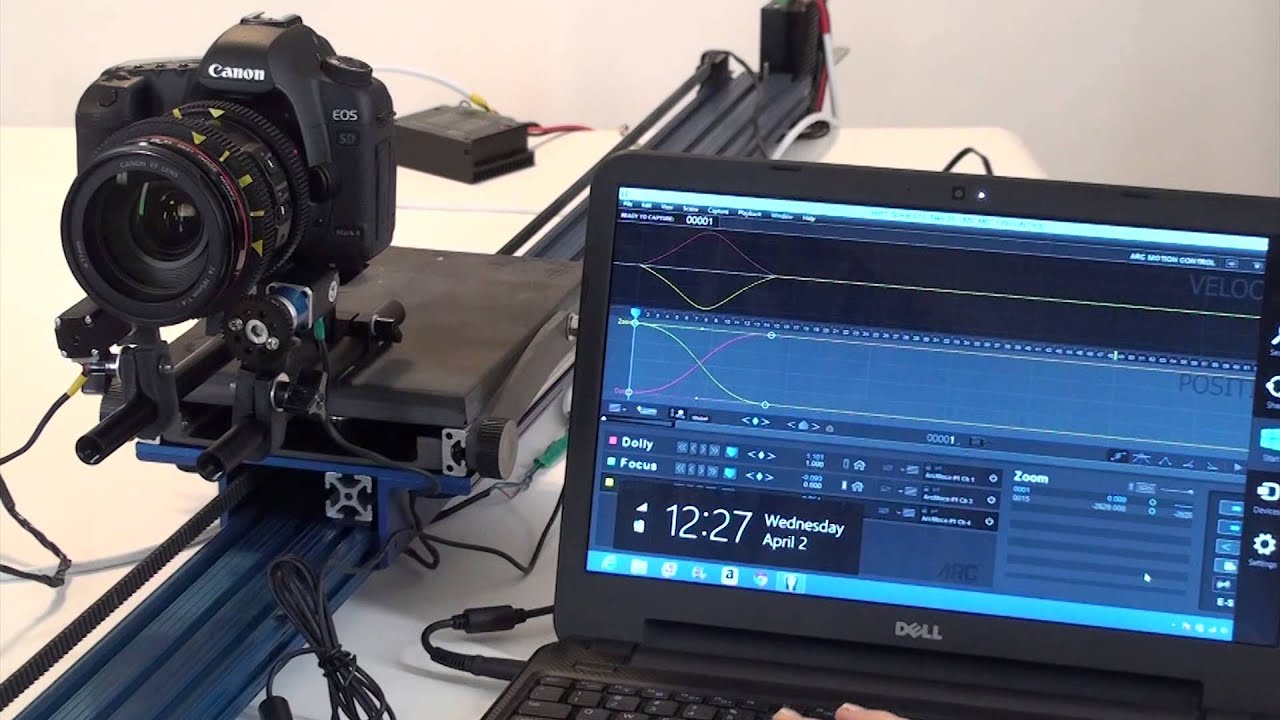

On Alien Xmas, we used the Canon EOS R body with Nikon prime lenses.

Above and below: concept art for “Alien Xmas” (interior above by Christina Yang) (Netflix / © 2020)

In general, digital tools are a time-saver. When we shot on film, we’d do the set-up, animate the shot plus any additional or multiple passes, then send the film off to the lab and wait to see the results the next day (if we were lucky enough to get it to the lab on time). With digital, we get to review a shot while it’s in progress. We get instant gratification, or despair if the shot isn’t working, but we know immediately if we need to reshoot or move on.

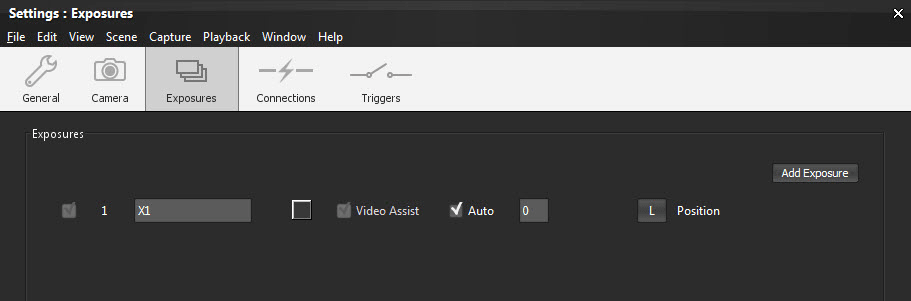

With the Video Lunchbox, not only could you toggle between the live frame and the last stored frame, you could also play back the entire shot while it was in progress and step through it as needed. That ability was huge if you needed to cut back into a shot and make a change without having to restart from the beginning. The only drawback with the Lunchbox was that it couldn’t capture at a resolution suitable for high-resolution or feature work.

Above and below: the “Alien Xmas” shoot (Netflix / © 2020)

Dragonframe has combined all those features and added the direct capture function. We think it has risen to its premier position largely because it was created by professionals working in the field of stop-motion animation: animators, directors of photography, and software developers with first-hand knowledge of what it takes to create animation. It continues to increase its capability by listening to its users and incorporating their ideas, and by keeping up to date with emerging technologies on the camera and hardware side of things. The software is usually ahead of the curve on improvements, but we always wanted a higher-resolution preview image. On Alien Xmas, we were fortunate to work directly with Canon and Dragonframe as beta testers on a firmware update that allowed the preview to be at HD resolution; there were also some other improvements to the camera control features. Now if we could only get it to actually animate the shot for us.

Dragonframe seems optimized for Canon DSLRs, but it works with a variety of other cameras as well. It works with both consumer and professional cameras. We don’t think there is a major manufacturer that doesn’t have some sort of compatibility with the software.

Dragonframe has become a very deep stop-motion studio package, so deep that it’s easy to overlook all the aspects we now take for granted. For Alien Xmas, the ability to program multiple-frame capture in conjunction with the DMX control for our front-light/back-light stages was huge. The ability for the animator to press one button and have the program turn the proper lights on and off and take the frames automatically is a big time-saver, and greatly reduces the chances of mistakes or forgotten elements. We also used it for multiple complicated lighting effects that could only be accomplished by shooting multiple passes on each frame.

Prior to switching over to digital cameras, we would use video assist cameras on our film cameras. This would allow us to view the live frame on a monitor, and by drawing on it, we were able to track and chart the desired movement. Crude, but it was extremely helpful in seeing the performance emerge. We then added a video switcher, which allowed us to capture and store a frame and dissolve between the live frame and that stored frame. It was a huge leap forward to be able to see that progression between the frames. The next evolution was Video Lunchbox, which allowed us to capture and store all the frames as they were taken.

We can’t remember exactly when we started using Dragonframe, probably somewhere around 2008–09. The program covered all the basic needs to do digital stop-motion animation. Over time, it has expanded its list of compatible cameras and added enhanced cinematography capabilities, a motion-control interface, DMX control, multiple-frame shooting, automated 3D capture, and sophisticated post-frame editing and re-sequencing.
